Earlier this week, Forbes published a piece on ScaleFactor, a startup using AI to automate accounting, which shut down after raising $100m.

Here’s the heart of the issue covered in the story: “Instead of [AI] producing financial statements, dozens of accountants did most of it manually from ScaleFactor’s Austin headquarters or from an outsourcing office in the Philippines, according to former employees. Some customers say they received books filled with errors, and were forced to re-hire accountants, or clean up the mess themselves.”

The piece triggered additional reactions from the press around the general theme of “AI and snake oil”. Here’s Erin Griffith from the NY Times:

And here’s Lucinda Shen from Fortune:

Couple of caveats:

- Those are perfectly legitimate questions and it’s the role of the press to ask them, so this post is NOT another “VC against the press” rant. I’m just mentioning the above for context because they articulate well the concerns and open questions.

- I have never met with ScaleFactor, so I have zero insider knowledge of what may or may not have happened there. Therefore, this is not a piece about ScaleFactor specifically.

Now, this whole “AI vs humans” topic is really interesting to me, as I’ve spent tons of time over the last few years with AI companies, and have seen those dynamics at play.

So I just wanted to share some of what I have learned, and I’ll make two quick points in this post:

- Having humans involved in the early days of building an AI product is a feature, not a bug. Whether you can “fire” those humans over time or not determines whether you have a business or not.

- Beyond that, there are important lessons to be learned about what use cases lend themselves well to building a sustainable AI business, or not.

Humans behind the scenes

One key thing to understand about AI startups is that they are not like your regular SaaS startups.

(In this post, “AI startups” are startups that leverage AI to power a business application, not providers of horizontal AI tools and technology)

For all the stories about the commoditization of AI, they remain deeply technical endeavors, which require substantial R&D effort.

A good portion of this R&D effort, especially early on, involves humans. Every AI company I’m familiar with has had a concept of “AI trainer”, at least in the early days. AI trainers are people who help build the data set, label the data, and/or resolve problems when the AI isn’t able to succeed by itself. Sometimes, all of this happens by having humans mimic what the AI is supposed to do. Sometimes they do it in a sandbox, sometimes they do it in production. I wrote about this over three years ago: Debunking the “no human” myth in AI.

So in the early days of an AI startup, you often find that the product is powered in large part by humans, with a little bit of AI. I don’t know the exact numbers, but perhaps 70% human to 30% AI wouldn’t be completely out of the question in the beginning.

Now, the clear goal of every AI startup is that this ratio will dramatically evolve over time, as the AI gets trained. Bit by bit, module by module, the idea is to automate more and more of the process, and ultimately get to as close to 100% AI automation as possible.

No AI startup founder starts their company to be in the offshore services business. It’s a means to an end.

The problem is that AI progress is very capricious. You can get to early success (call it 60% or 70% automation) reasonably quickly. But then you often get stuck. Sometimes it’s temporary: you make no progress for a few months, but eventually the next breakthrough comes along. Sometimes you get stuck forever.

From a business standpoint, of course, this makes all the difference in the world. With a bunch of humans involved in the early days, you have a terribly negative gross margin business. The hope is that over time you graduate to something that looks much more like a SaaS business in terms of overall economics (with the added benefit of some defensibility as your core AI is hard to replicate). But if you don’t dramatically improve automation and get rid of the humans in the loop over time, you don’t have a business.
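To make the economics concrete, here is a back-of-the-envelope sketch in Python. Every number in it (revenue per task, cost of a human handling a task, cost of running the AI) is made up purely for illustration; none of it comes from ScaleFactor or any real company, and the function name is hypothetical.

```python
def gross_margin(automation_rate, revenue_per_task=10.0,
                 human_cost_per_task=20.0, ai_cost_per_task=0.5):
    """Gross margin when a share `automation_rate` of tasks needs no human.

    All default figures are hypothetical, chosen only to show the shape
    of the curve, not to describe any actual business.
    """
    if not 0.0 <= automation_rate <= 1.0:
        raise ValueError("automation_rate must be between 0 and 1")
    # Assumption: every task pays the AI cost, and each task the AI
    # cannot finish also pays for a human to complete it.
    cost = ai_cost_per_task + (1.0 - automation_rate) * human_cost_per_task
    return (revenue_per_task - cost) / revenue_per_task


for rate in (0.3, 0.7, 0.95):
    print(f"{rate:.0%} automated -> gross margin {gross_margin(rate):.0%}")
```

With these made-up inputs, the margin is deeply negative at 30% automation and only starts to look like software once automation gets very high, which is why getting stuck at 60% or 70% can be fatal.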

While again I don’t have any knowledge of the facts other than what was reported in the press, I suspect this is what happened at ScaleFactor. The early investors financed the vision and bet that the company would be able to transition from humans to fully automated AI over time. As the company wasn’t able to automate enough, however, later stage investors looked at the business, saw the (presumably) negative gross margin, and all passed. The company then ran out of money.

Picking the right use case

For founders, perhaps the most interesting takeaway from this discussion is that, when it comes to building a startup leveraging AI automation at its core, not all business use cases are created equal. So you should pick wisely.

AI is a prediction technology that is powerful, but doesn’t get it right 100% of the time – even after all the training described above.

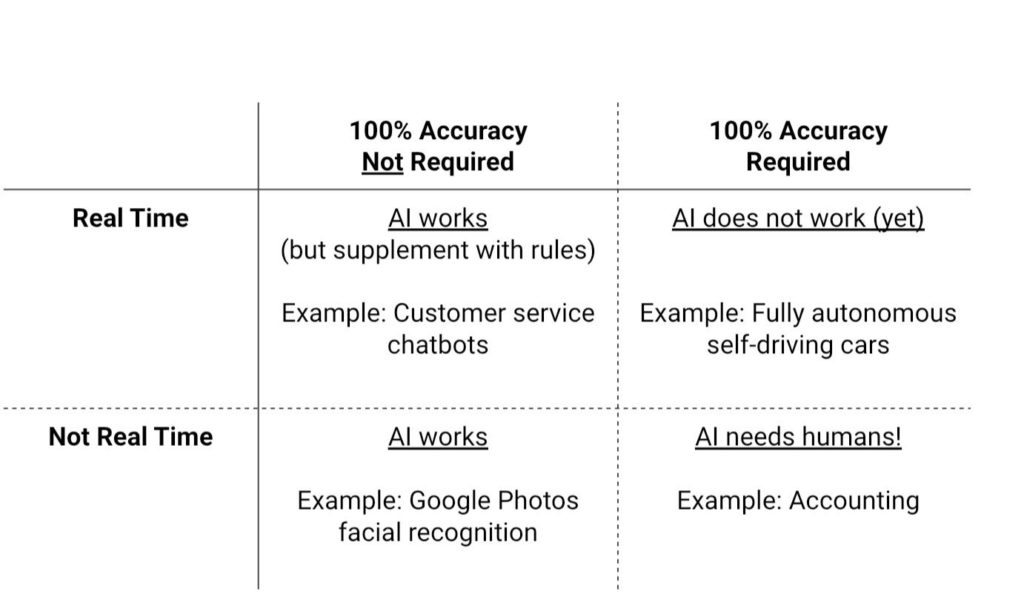

So it’s much tougher to solve a problem entirely with AI when your product needs to be 100% successful 100% of the time. And it’s even tougher when the expectation is that this 100% success happens in real time.

Here are some simple (and probably imperfect) heuristics to think about it:

Fully-autonomous self-driving is a good example of needing 100% success in real time. An AI-powered car would need to make a million decisions in real time (stop, turn, avoid a pedestrian, etc.), and get it right 100% of the time – otherwise people would literally die. In the current state of AI technology, you cannot fully automate the solution: humans are indispensable in cars 100% of the time.

At the other extreme, there are use cases where 80-90% accuracy is perfectly fine. For example, if Google Photos says it’s a pic of little Suzie when it’s little Emma, it’s annoying but nobody gets hurt. Users also generally don’t have the expectation that this determination happens in real time. This is probably the easiest category to tackle for budding AI entrepreneurs. You can fully automate the solution with AI, no humans required.

The cases in between are the tricky ones.

If you think of customer service chatbots, they need to operate in real time, but they probably fall in the category of 80%-90% success is ok. Sure, it’s annoying when the chatbot gets your request wrong, but it’s great that it works most of the time, and certainly beats waiting 30 minutes on the phone until a customer service rep picks up your call. (Developers can also layer in some simple business rules to supplement the AI in cases where no prediction is required.)

The most difficult use case is precisely something like accounting, which ScaleFactor worked on. Here, you need 100% success 100% of the time – accounting is an exact science. But AI is not going to get you 100% success 100% of the time; we’re just not at that stage yet. So you’re going to need humans in the loop to do the “last mile” – check whatever comes out of the AI system, and then correct it before you present it to the customer. This is possible in the case of an accounting business because end users typically don’t expect their financial statements to be prepared in real time (our experience with accountants has trained us otherwise).
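One common way to organize that “last mile” is to let the AI handle the items it is confident about and queue the rest for a person. The sketch below assumes the model reports a confidence score with each prediction; the `Prediction` class, the `route` function and the 0.95 threshold are all hypothetical, and nothing here describes how ScaleFactor (or anyone else) actually built their system.

```python
from dataclasses import dataclass


@dataclass
class Prediction:
    label: str          # e.g. the ledger category the model proposes
    confidence: float   # model's self-reported confidence, 0.0 to 1.0


def route(prediction, threshold=0.95):
    """Send confident predictions straight through, the rest to a human."""
    return "auto" if prediction.confidence >= threshold else "human_review"


def automation_rate(predictions, threshold=0.95):
    """Share of predictions that would skip human review."""
    if not predictions:
        return 0.0
    auto = sum(1 for p in predictions if route(p, threshold) == "auto")
    return auto / len(predictions)


# Hypothetical batch of transaction categorizations.
batch = [
    Prediction("Rent", 0.99),
    Prediction("Travel", 0.62),
    Prediction("Payroll", 0.97),
    Prediction("Software", 0.80),
]
for p in batch:
    print(p.label, "->", route(p))
print("automation rate:", automation_rate(batch))
```

Note that a high confidence score is not a guarantee of correctness, so when the bar is 100% accuracy, even the “auto” bucket may need spot checks. The share of items landing in the review queue is the number that has to shrink over time for the business to work.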

This last use case is exactly where getting stuck on AI progress can make the difference between having a business or not. So it’s a tricky category to pick for entrepreneurs, with a perilous journey ahead. “No guts, no glory” I guess, but everything else being equal, it probably makes sense to pick an easier problem.
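For what it’s worth, the heuristics in this section boil down to two questions: does the use case need to be right 100% of the time, and does it need to answer in real time? Here is a tiny Python sketch of that lookup. The function name and the wording of the returned labels are just one reading of the four examples discussed here, not a formal taxonomy.

```python
def suggested_approach(needs_perfect_accuracy, needs_real_time):
    """Map the two questions to the rough categories discussed in the post."""
    if needs_perfect_accuracy and needs_real_time:
        # e.g. fully autonomous self-driving
        return "cannot fully automate today: a human stays in control"
    if needs_perfect_accuracy:
        # e.g. accounting
        return "AI plus humans doing the last-mile review"
    if needs_real_time:
        # e.g. customer service chatbots
        return "automate, supplemented with simple business rules"
    # e.g. photo tagging
    return "fully automate with AI"


print(suggested_approach(needs_perfect_accuracy=True, needs_real_time=False))
```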

_________

Note: all the above may be moot now that GPT-3 is going to solve all problems in the world 🙂

Great article. I enjoyed it. It’s also important to understand that when AI really is AI, it comes in 2 main types. Symbolic AI uses description logic knowledge representation & reasoning to give the AI Machine Understanding, where the humans and the AI have a shared understanding of the data, information or knowledge integrated into the AI. The other type is Non-symbolic AI, which uses Machine Learning / Deep Learning to learn the patterns in the data and tell us about those patterns. It’s these two main types of AI that are being combined in Hybrid AI (aka Co-symbolic AI / Neurosymbolic AI), which combines the Machine Understanding and explainable results of Symbolic AI with the Machine Learning / Deep Learning of Non-symbolic AI. Symbolic AI is built by Knowledge Engineers and Ontologists, whereas Non-symbolic AI is built by Data Scientists, and the 2 main types have very different use cases and technology stacks. It’s pretty important to understand these 2 different types of AI and the amazing synergies between them when combined.

Great thought. Had a very interesting chat with Gary Marcus recently about this type of hybrid approach: https://mattturck.com/marcus/

Yes, I’m familiar with Gary Marcus’s work and think he’s definitely on the right track. The spotlight has been on Non-symbolic AI such as Machine Learning and Deep Learning for the past decade, but it’s worth keeping an eye on Symbolic AI as well. A lot of people still refer to Symbolic AI as “rules-based AI”, which better describes Symbolic AI as it was from the 1960s to the 1990s. However, Symbolic AI transformed during the AI winter that followed the 1990s and focused more on creating description logics knowledge representation and reasoning standards at the W3C (OWL, RDF, SPARQL, SHACL, SWRL, etc). Modern Symbolic AI is more focused on description logics ontologies that enable the AI with Machine Understanding and provide the IT standards needed to enable semantic interoperability for the fusion of different types and formats of information. If you hear someone talking about ‘rules-based AI’ then take it with a grain of salt, but if you hear someone talking about ‘description logics’ or ‘ontology-based AI’ then I’d give it more of my attention. Just look at biomedical and life sciences, where nearly all our domain-specific knowledge is captured in ontologies to make the knowledge understandable to both medical experts and machines. http://www.buffalo.edu/news/releases/2020/06/016.html