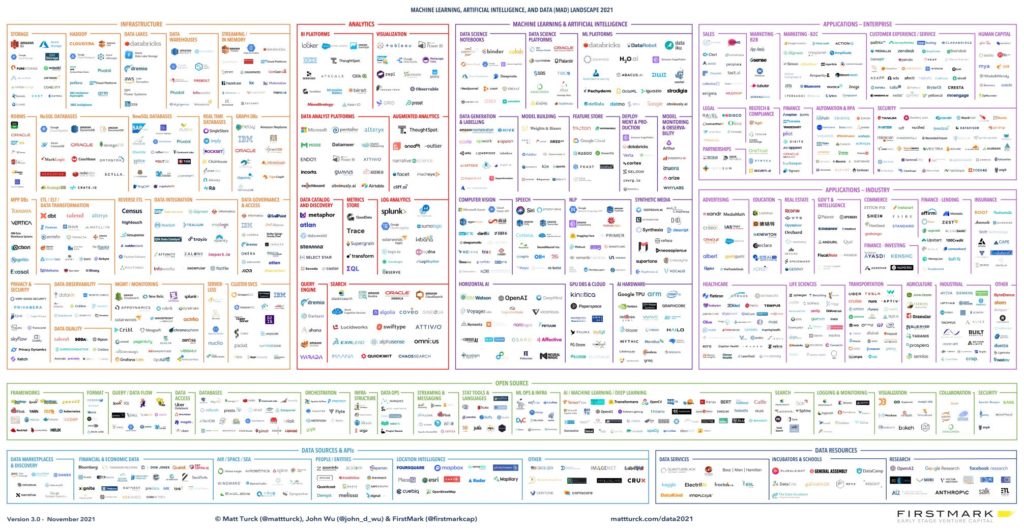

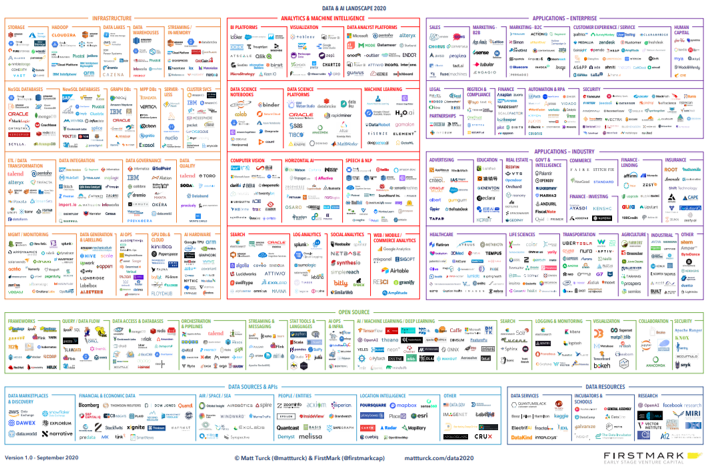

This is our tenth annual landscape and “state of the union” of the data, analytics, machine learning and AI ecosystem.

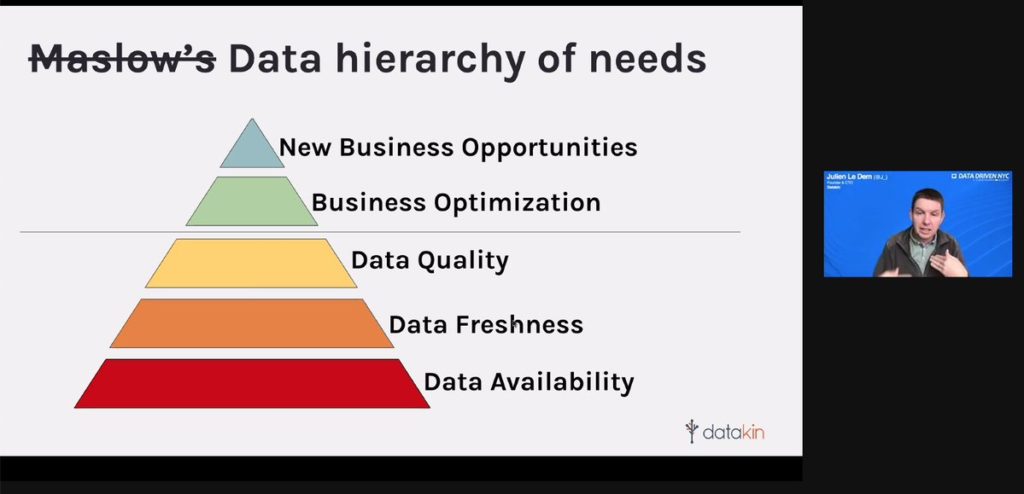

In 10+ years covering the space, things have never been as exciting and promising as they are today. All trends and subtrends we described over the years are coalescing: data has been digitized, in massive amounts; it can be stored, processed and analyzed fast and cheaply with modern tools; and most importantly, it can be fed to ever-more performing ML/AI models which can make sense of it, recognize patterns, make predictions based on it, and now generate text, code, images, sounds and videos.

The MAD (ML, AI & Data) ecosystem has gone from niche and technical, to mainstream. The paradigm shift seems to be accelerating with implications that go far beyond technical or even business matters, and impact society, geopolitics and perhaps the human condition.

There are still many chapters to write in the multi-decade megatrend, however. As every year, this post is an attempt at making sense of where we are currently, across products, companies and industry trends.

Here are the prior versions: 2012, 2014, 2016, 2017, 2018, 2019 (Part I and Part II), 2020, 2021 and 2023 (Part I, Part II, Part III, Part IV).

Our team this year was Aman Kabeer and Katie Mills (FirstMark), Jonathan Grana (Go Fractional) and Paolo Campos, major thanks to all. And a big thank you as well to CB Insights for providing the card data appearing in the interactive version.

This annual state of the union post is organized in three parts:

- Part: I: The landscape (PDF, Interactive version)

- Part II: 24 themes we’re thinking about in 2024

- Part III: Financings, M&A and IPOs